You’ve heard of companies using AI to screen resumes. You’ve heard of bots scheduling interviews.

But this?

This might be the first time someone got rejected… because a manager asked AI if their hair was acceptable.

Yeah. Seriously.

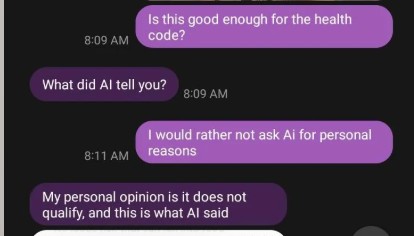

It started simple.

A job applicant trying to follow the rules asked a completely normal question:

“Am I allowed to use a hairnet? I don’t want to cut it shorter.”

Reasonable, right?

Instead of giving a clear, human answer—like literally every manager on earth has done since the beginning of time—the response came back cold:

“We do not allow hairnets… we will remove your application.”

Wait… what?

No suggestion.

No clarification.

No “just wear a hat.”

Just straight to rejection.

But it gets worse.

When the applicant asked why a hairnet—an actual industry-standard solution—wasn’t allowed, the explanation didn’t come from a handbook, a policy, or even common sense.

It came from… AI.

“This is what AI said.”

Let that sink in.

A real human being, making a real hiring decision… outsourced it to a chatbot.

And treated the result like it was company policy carved into stone.

Here’s the kicker:

- Hairnets = “not allowed”

- Kitchen rules = “don’t apply to you”

- Hair still “not acceptable”

- Solution offered = none

So what exactly was the right answer?

Nobody knows.

Not the applicant.

Not the manager.

Apparently not even the AI.

This isn’t about hair.

It’s about what happens when people stop thinking for themselves and start hiding behind “AI said so.”

Because here’s the truth:

AI can give suggestions.

AI can give guidance.

But AI should never be the final boss of basic human decisions—especially when someone’s job is on the line.

So what’s the real problem here?

Not the policy.

Not the haircut.

Not even the rejection.

It’s this:

A manager who can’t explain a rule without pulling out a screenshot… probably shouldn’t be enforcing it.

Images from Reddit.